Want search engines to understand your WordPress website better? Setting up robots.txt properly is key. This inconspicuous file works like a navigation map for the site: it steers search engine crawlers toward important content and away from irrelevant pages, which reduces server load and improves SEO. This article shows how to configure robots.txt with the Yoast SEO plugin so that crawlers fetch the right pages and the site is indexed more effectively.

![Image[1]-Yoast SEO with robots.txt: How to configure the crawler rules correctly?](https://www.361sale.com/wp-content/uploads/2025/06/20250630135315420-image.png)

1. What is robots.txt?

robots.txt is a plain text file in the root directory of a website. It tells search engine crawlers which paths they may crawl and which paths they should not.

The basic syntax includes:

User-agent: [Crawler name]

Disallow: [Paths that are forbidden to be crawled]

Allow: [Allowed paths]

Example:

User-agent: *

Disallow: /wp-admin/

Allow: /wp-admin/admin-ajax.php

This means that all crawlers are forbidden to crawl the wp-admin backend, except for the admin-ajax.php file, which they may still fetch.
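To see why the Allow line wins over the broader Disallow line, here is a minimal Python sketch of the "most specific rule wins" precedence that Google and RFC 9309 describe. It is a simplified illustration, not a full parser: it assumes plain path prefixes (no `*` or `$` wildcards), and the `is_allowed` function and `RULES` list are hypothetical names introduced for this example.

```python
# Simplified rule matcher: plain path prefixes only, no "*" or "$" wildcards.
def is_allowed(rules, path):
    """Return True if `path` may be crawled under `rules`.

    `rules` is a list of (directive, prefix) pairs for one User-agent group,
    for example ("disallow", "/wp-admin/").
    """
    best_length = -1   # length of the most specific matching prefix so far
    allowed = True     # a path that matches no rule is allowed by default
    for directive, prefix in rules:
        if not prefix or not path.startswith(prefix):
            continue   # an empty value or a non-matching prefix is ignored
        longer = len(prefix) > best_length
        tie_for_allow = len(prefix) == best_length and directive == "allow"
        if longer or tie_for_allow:
            best_length = len(prefix)
            allowed = directive == "allow"
    return allowed


# The example rules from this section (hypothetical in-memory representation).
RULES = [
    ("disallow", "/wp-admin/"),
    ("allow", "/wp-admin/admin-ajax.php"),
]

if __name__ == "__main__":
    for path in ("/wp-admin/options.php", "/wp-admin/admin-ajax.php", "/blog/"):
        print(path, "->", "allowed" if is_allowed(RULES, path) else "blocked")
```

Because `/wp-admin/admin-ajax.php` is a longer match than `/wp-admin/`, the Allow rule takes precedence for that one file. Individual crawlers may implement precedence differently, so treat this as a model of the common behavior rather than a guarantee.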

2. Why is robots.txt configuration important?

- Improved crawling efficiency

Prevent crawlers from wasting crawl budget on duplicate or worthless pages.

- Protection of private content

Block search engines from accessing sensitive paths, such as the backend or system files.

- Prevention of duplicate content indexing

Used together with noindex, it limits the SEO impact of duplicate or meaningless pages.

However, be aware that robots.txt is just a "request" for crawlers, and some malicious crawlers may ignore its rules.

3. Yoast SEO and robots.txt

Yoast SEO does not generate the robots.txt file automatically, but it provides a convenient entry point for editing robots.txt, so you do not have to modify files on the server via FTP.

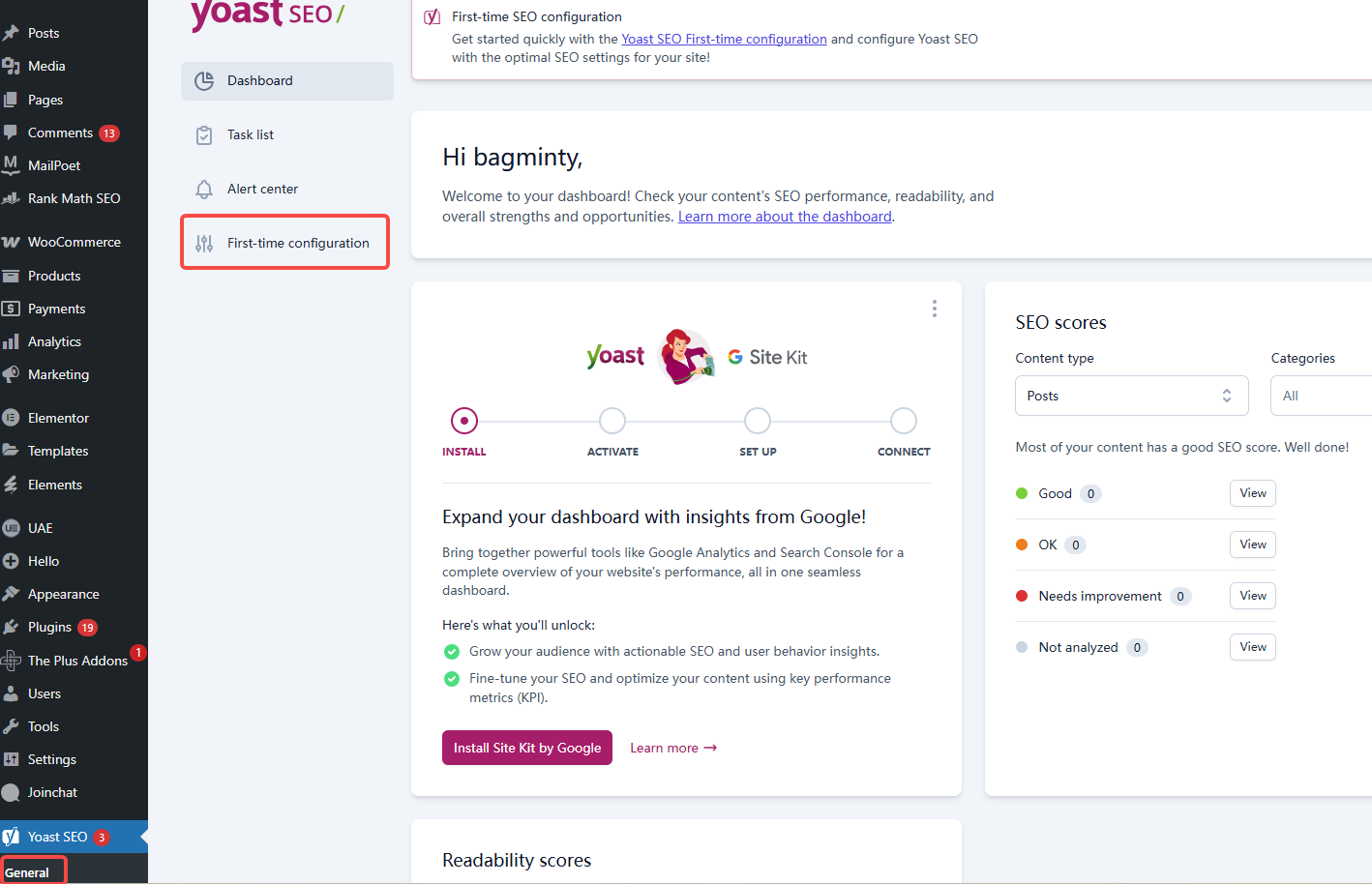

How to edit robots.txt via Yoast SEO?

- Log in to the WordPress backend

- In the left menu, click Yoast SEO > Tools

![Image [2]-Yoast SEO with robots.txt: How to properly configure crawler rules?](https://www.361sale.com/wp-content/uploads/2025/06/20250630135842634-image.png)

- Select File editor

![Image [3]-Yoast SEO with robots.txt: How to configure the crawler rules correctly?](https://www.361sale.com/wp-content/uploads/2025/06/20250630135911851-image.png)

- If WordPress has write access to the file, you will see the robots.txt edit box

![Image [4]-Yoast SEO with robots.txt: How to properly configure crawler rules?](https://www.361sale.com/wp-content/uploads/2025/06/20250630135933982-image.png)

- Enter your crawler rules here and click [Save Changes] to apply them

If a message says the file cannot be edited, you need to create the robots.txt file manually via FTP or your hosting control panel.
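If you do end up creating the file by hand, one option is to generate it locally and then upload it to the site root. The sketch below is only an illustration under that assumption: `build_robots_txt` is a hypothetical helper, it writes a single `User-agent: *` group, and the upload step itself (FTP or control panel) is left to you.

```python
from pathlib import Path


def build_robots_txt(rules, sitemap_url=None):
    """Build robots.txt text for a single "User-agent: *" group.

    `rules` is a list of (directive, path) pairs such as
    ("Disallow", "/wp-admin/"). `sitemap_url` is optional.
    """
    lines = ["User-agent: *"]
    for directive, path in rules:
        lines.append(f"{directive}: {path}")
    if sitemap_url:
        # A blank line separates the rule group from the Sitemap line.
        lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    content = build_robots_txt(
        [("Disallow", "/wp-admin/"), ("Allow", "/wp-admin/admin-ajax.php")]
    )
    # Write the file locally; upload it to the website root afterwards.
    Path("robots.txt").write_text(content, encoding="utf-8")
    print(content)
```

The file must be named exactly robots.txt and sit in the root directory of the site for crawlers to find it.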

4. Common robots.txt configuration examples

Below are examples of common WordPress robots.txt configurations that can be adapted to the structure of your site:

4.1 Basic configuration

User-agent: *

Disallow: /wp-admin/

Allow: /wp-admin/admin-ajax.php

This configuration blocks crawlers from the backend directory but still allows requests to the Ajax handler file.

4.2 Blocking the plugin directory

If you wish to hide the plugin directory, you can add:

Disallow: /wp-content/plugins/

However, blocking it completely is generally not recommended unless you have confirmed that these paths do not affect front-end functionality.

4.3 Allow full crawling

If you want the crawler to crawl all the content of the site:

User-agent: *

Disallow:

4.4 Blocking tag and search result pages

Tag pages (/tag/) and on-site search result pages usually have little SEO value and are prone to duplicate content, so crawling them can be disabled:

Disallow: /?s=

Disallow: /tag/

Note: If noindex has already been set for these pages, you can leave them crawlable instead. A crawler has to fetch a page to see its noindex directive, and this also avoids the Google Search Console warning "noindex page blocked by robots.txt".
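Rules like these match against the path and query string of a URL, starting from the first slash after the domain. The following Python sketch shows which URLs the two prefixes would catch. It is a simplified prefix check, not a full robots.txt parser, and `crawl_target`, `is_blocked`, and the sample URLs are hypothetical names chosen for illustration.

```python
from urllib.parse import urlsplit

# The two Disallow prefixes from this section.
BLOCKED_PREFIXES = ["/?s=", "/tag/"]


def crawl_target(url):
    """Return the part of the URL that robots.txt rules are matched against."""
    parts = urlsplit(url)
    path = parts.path or "/"
    return path + ("?" + parts.query if parts.query else "")


def is_blocked(url):
    """Return True if the URL starts with one of the blocked prefixes."""
    target = crawl_target(url)
    return any(target.startswith(prefix) for prefix in BLOCKED_PREFIXES)


if __name__ == "__main__":
    for url in (
        "https://www.yoursite.com/?s=shoes",
        "https://www.yoursite.com/tag/news/",
        "https://www.yoursite.com/blog/?s=shoes",
        "https://www.yoursite.com/tagged/",
    ):
        print(url, "->", "blocked" if is_blocked(url) else "crawlable")
```

Note that `Disallow: /?s=` only covers search URLs at the site root. If your site exposes search under another path, that path would need its own rule.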

5. Best practices for configuring robots.txt

- Develop rules based on the actual situation and avoid blindly copying others' configurations

- Do not block crawling of CSS and JS files; otherwise search engines cannot properly evaluate page layout and mobile friendliness

- Pair robots.txt with your sitemap.xml submission by adding the sitemap link to robots.txt, for example:

![Image [5]-Yoast SEO with robots.txt: How to properly configure crawler rules?](https://www.361sale.com/wp-content/uploads/2025/06/20250630194157501-image.png)

Sitemap: https://www.yoursite.com/sitemap_index.xml

- Once configured, check the file in Google Search Console (the robots.txt report has replaced the older robots.txt testing tool) to confirm it behaves as expected

- Combine it with Yoast SEO's noindex setting for flexible management of page indexing status
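As a quick local check before relying on an online tool, Python's standard library can parse a robots.txt draft and report the sitemap it declares. This is a rough sketch, assuming Python 3.8 or later (for `site_maps()`), and the `ROBOTS_TXT` draft below simply combines the examples from this article.

```python
from urllib.robotparser import RobotFileParser

# Draft robots.txt combining the basic configuration and the sitemap line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Allow: /wp-admin/admin-ajax.php

Sitemap: https://www.yoursite.com/sitemap_index.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Sitemap URLs declared in the file (None if there are none).
sitemaps = parser.site_maps()

# NOTE: the standard-library parser applies the first matching rule in file
# order, which can differ from Google's longest-match behavior for Allow
# exceptions. Only simple cases are checked here.
blog_ok = parser.can_fetch("*", "https://www.yoursite.com/blog/")
admin_ok = parser.can_fetch("*", "https://www.yoursite.com/wp-admin/options.php")

if __name__ == "__main__":
    print("Sitemaps:", sitemaps)
    print("Blog crawlable:", blog_ok)
    print("Admin crawlable:", admin_ok)
```

A local check like this only catches syntax slips and obvious rule mistakes. How a specific search engine interprets the file is still best confirmed in that engine's own tools.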

6. Common configuration errors

- Mistakenly Disallowing the whole site, resulting in the site not being indexed

- Blocking the wp-content directory, so crawlers cannot load styles and scripts and pages render incorrectly for them

- Relying on robots.txt to block private content without password protection is a security risk.
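The first mistake is easy to make because `Disallow: /` (block everything) and an empty `Disallow:` (block nothing) differ by a single character. The sketch below uses Python's standard-library parser to flag a draft that blocks the whole site. `blocks_whole_site` is a hypothetical helper written for this example.

```python
from urllib.robotparser import RobotFileParser


def blocks_whole_site(robots_txt, user_agent="*"):
    """Return True if the given robots.txt text forbids crawling the homepage."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, "/")


BLOCK_ALL = "User-agent: *\nDisallow: /\n"   # blocks the entire site
ALLOW_ALL = "User-agent: *\nDisallow:\n"     # empty value: nothing is blocked

if __name__ == "__main__":
    print("Disallow: /  blocks site:", blocks_whole_site(BLOCK_ALL))
    print("Disallow:    blocks site:", blocks_whole_site(ALLOW_ALL))
```

Running a check like this on a draft before saving it can catch an accidental site-wide block early.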

Summary

robots.txt is a fundamental part of your website's SEO strategy. With Yoast SEO's file editing feature, you can easily manage robots.txt, guide search engine crawlers correctly, and improve crawl efficiency and overall SEO performance.

Link to this article: https://www.361sale.com/en/64022

The article is copyrighted and must be reproduced with attribution.
